What Is "Abandonware" and Is It a Security Risk?

The open-source ecosystem is vast and replete with projects at all stages of development. There are nascent projects that are just getting started and toy projects that were never really intended for production use. There are, of course, also high-quality, well-maintained projects that many other projects depend on. But sometimes, even often, these projects gradually (or not so gradually) fall into a state of neglect, becoming “abandoned.” This is especially common in the open-source world, where most developers are not compensated for their work.

Often, too, these projects are understaffed, with just one or two maintainers, and therefore do not really pass the “Bus Test”: if a single maintainer were suddenly unavailable, work on the project would stall. Given all the things that could happen in life, it is easy to see how a project could unexpectedly become effectively unmaintained in a brief period. Maintainers might also simply become overburdened by, or bored with, the projects they manage and, lacking any financial incentive to support them, give them less attention than they otherwise would. Whatever the cause, identifying abandonware is not necessarily straightforward.

The most naïve way to go about identifying likely abandonware candidates would be simply to look at the most recent commit to version control, which is the last time a developer made a change to the project’s code. Naturally, if that has not happened in months, or years, then there is a good chance that the project has been effectively abandoned. However, this isn’t necessarily true. If a project is extremely limited in scope and well-vetted, changes to the code might not be necessary. For example, a project that implements a parser for a well-established and fixed network protocol or document format could be in a state where it requires very little maintenance, if any.
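The last-commit check described above can be sketched in a few lines of Python. This is a minimal illustration, not a recommended detector: the function names and the 365-day cutoff are assumptions chosen for the example, and the timestamp of the most recent commit is assumed to have been obtained elsewhere (for example, from the version control history).

```python
from datetime import datetime, timezone
from typing import Optional


def days_since_last_commit(last_commit: datetime,
                           now: Optional[datetime] = None) -> int:
    """Return the number of whole days between the last commit and `now`."""
    if now is None:
        now = datetime.now(timezone.utc)
    return (now - last_commit).days


def looks_stale(last_commit: datetime,
                max_idle_days: int = 365,  # hypothetical cutoff, tune per use case
                now: Optional[datetime] = None) -> bool:
    """Naive heuristic: flag a project whose last commit is older than the cutoff."""
    return days_since_last_commit(last_commit, now) > max_idle_days
```

As the paragraph notes, a `True` result here is only a hint: a small, finished parser may legitimately go years without a commit.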

Conversely, the fact that a project has recent commits, even quite a few of them, does not necessarily mean that it is well-maintained from a security standpoint. It is entirely possible that new features are being rolled out (increasing the attack surface along the way) while security- and dependency-related updates are ignored.

What else can be done to find projects whose level of maintenance leaves something to be desired? You need a way to measure not just developer output, but developer responsiveness.

Open-source software development typically follows a distributed model. In a typical project, a great deal of development is driven by the users of the software, who submit issues (bug reports or other items requiring attention) and pull requests (PRs). PRs are code changes, often written by non-core developers, that are submitted to the project maintainers for approval and eventual inclusion in the core project code. To gain a reasonable approximation of developer responsiveness, it is useful to look at the proportion of these issues and PRs that are not addressed, as well as how long they tend to remain unaddressed.
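The two responsiveness measures just described, the share of issues and PRs still open and how long the open ones have been waiting, might be computed along these lines. This is a sketch under stated assumptions: the `Ticket` record and both function names are hypothetical, and the open and close timestamps are assumed to have already been collected from the project's issue tracker.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional, Sequence


@dataclass
class Ticket:
    """Hypothetical record for one issue or PR."""
    opened_at: datetime
    closed_at: Optional[datetime] = None  # None means the ticket is still open


def open_ratio(tickets: Sequence[Ticket]) -> float:
    """Fraction of all tickets ever submitted that are still open."""
    if not tickets:
        return 0.0
    open_count = sum(1 for t in tickets if t.closed_at is None)
    return open_count / len(tickets)


def median_open_age_days(tickets: Sequence[Ticket], now: datetime) -> float:
    """Median age, in whole days, of the tickets that are still open."""
    ages = [(now - t.opened_at).days for t in tickets if t.closed_at is None]
    return float(median(ages)) if ages else 0.0
```

The median is used here rather than the mean so that a single very old ticket does not dominate the result; that is a design choice for this example, not something the approach requires.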

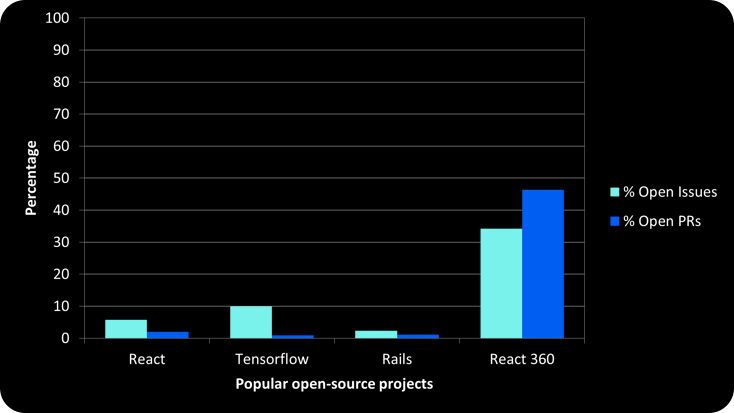

Certainly, some submitted bug reports and PRs might be invalid or undesired, but even then, a diligent maintainer would typically mark them as such and close them so that they do not remain open indefinitely. Consequently, most well-maintained projects tend to have a low proportion of open issues relative to the total number of issues submitted over the lifetime of the project. Figure 1 shows these metrics for several very popular open-source projects as of the date of this writing. Three of these projects are actively maintained, while the fourth (React 360) is an archived project and thus, almost by definition, in an abandoned state.

Figure 1 – % Open Issues and PRs for popular open-source projects

By considering measures such as these along with the timeline of commits to the repository and applying appropriate weighting and thresholds, abandoned projects can be fairly reliably identified. But what is the upshot? Many projects that rely on open-source libraries may have hundreds or even thousands of dependencies. Surely there will be some that are no longer actively maintained. Does that necessarily imply a critical security risk? No, but by combining measures like a project’s abandonware “score” with other relevant data points such as vulnerability data, it becomes possible to build a security profile for software dependencies that can indicate the current risk level and also whether these issues are likely to be resolved soon, if ever.
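To make the idea of "weighting and thresholds" concrete, the following sketch combines commit staleness with the two responsiveness measures into a single score between 0 and 1. Everything specific in it is an assumption made for illustration: the function names, the weights, the caps used for normalization, and the 0.6 threshold are hypothetical values, not the ones Phylum uses.

```python
from typing import Tuple


def clamp01(x: float) -> float:
    """Clamp a value into the range [0, 1]."""
    return max(0.0, min(1.0, x))


def abandonware_score(days_idle: float,
                      open_ratio: float,
                      median_open_age_days: float,
                      idle_cap: float = 730.0,  # hypothetical: 2+ years idle scores 1.0
                      age_cap: float = 365.0,   # hypothetical: 1+ year open scores 1.0
                      weights: Tuple[float, float, float] = (0.4, 0.3, 0.3)) -> float:
    """Weighted sum of three normalized indicators; higher means more neglected."""
    w_idle, w_ratio, w_age = weights
    idle_part = clamp01(days_idle / idle_cap)
    ratio_part = clamp01(open_ratio)
    age_part = clamp01(median_open_age_days / age_cap)
    return w_idle * idle_part + w_ratio * ratio_part + w_age * age_part


def is_likely_abandoned(score: float, threshold: float = 0.6) -> bool:
    """Apply an illustrative cutoff to the combined score."""
    return score >= threshold
```

In practice, a score like this would be one input among several; as the paragraph says, it becomes more useful when combined with other data points such as known vulnerabilities in the dependency.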

This multiple-indicator approach is core to how Phylum identifies risk in the massive dataset of open-source software. There are few cases where a single indicator correlates with a critical security issue, but the combination of multiple indicators can be effective in identifying threats to the open-source software supply chain. It also allows Phylum’s analysis to look for a variety of conditions simultaneously while maintaining a low rate of false positives.