A Spooky Occurrence in the Open-Source Ecosystem: Hacktoberfest 2020

One of the things that excites me about the open-source software ecosystem is entirely outside the technical components of code and computation. Instead, as someone whose PhD was focused on behavior and networks in social systems—ant colonies, bee hives, social media, etc.—I find the social element of open-source software to be the most intriguing.

Much like the advent of social media, open-source software is as much a technological innovation as it is a social one. Open-source software is entirely driven by a large community of pro-bono developers who collaborate to create and update the software that now underlies much of our most important technology! With this social core of open-source, some have naturally begun trying to recruit new members to this grassroots development community.

In this blog post, I want to explore a controversy that surrounds a prominent open-source software recruitment event: the 2020 edition of Hacktoberfest. Run every October by cloud computing company DigitalOcean, Hacktoberfest is an annual event aimed at encouraging participation in the open-source community. The premise is simple: during the month of October, participants are tasked with submitting pull requests (PRs)—essentially, a set of changes to an open-source software project that is then reviewed and potentially accepted by the project’s maintainers—to open-source projects on GitHub and GitLab. Participants who create four pull requests that are accepted get a prize, a free t-shirt.

While the intentions of Hacktoberfest are admirable, Hacktoberfest 2020 drew the ire of many members of the open-source community. Perhaps due to a perfect storm of better advertising and a historic pandemic that had many sitting at home, Hacktoberfest 2020 saw far more participants than previous years. While that would seem to be a great outcome, many software maintainers accused Hacktoberfest of creating a deluge of low-quality pull requests that quickly overwhelmed open-source projects. Some even said that Hacktoberfest 2020 actively harmed the open-source software ecosystem.

From our perspective at Phylum, the controversy surrounding Hacktoberfest 2020 is highly relevant to our mission of securing the open-source software ecosystem. Highly used software might gain security vulnerabilities through low-effort code contributions written by those who simply wanted to get a t-shirt as quickly as possible. Worse yet, bad actors might try to use the massive influx of new contributions as cover to get malicious code contributions approved in important projects that are frequently used and relied upon.

Here, we attempt to cut through the controversy and take a quick look at what really went down in Hacktoberfest 2020.

Did Hacktoberfest 2020 shift the open-source software ecosystem?

We looked at the behavior of authors who committed code changes—both pull requests to larger projects and direct changes to projects they own or maintain—during October 2020. While not perfect, this approach allows us to reasonably identify people who participated in Hacktoberfest.

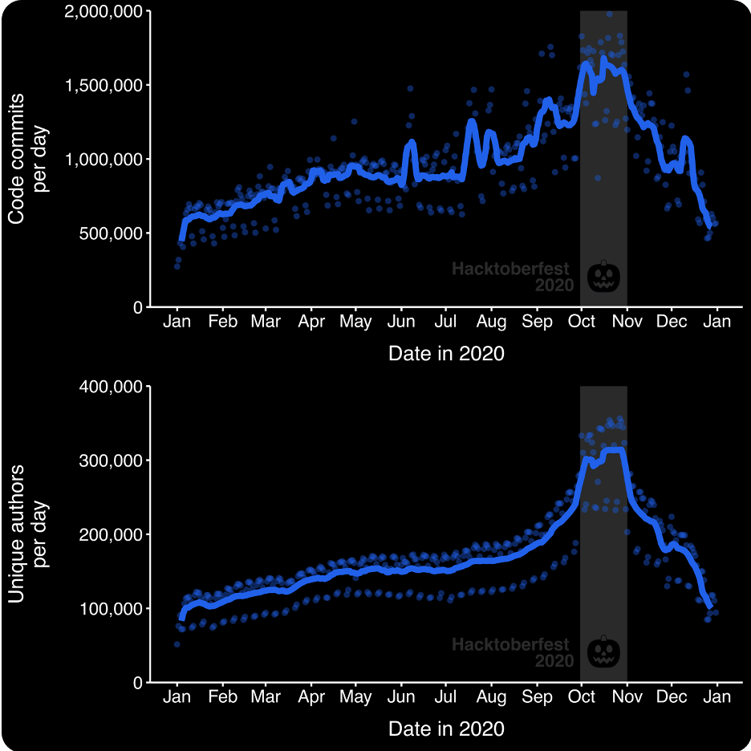

Sure enough, we can easily spot the impact of Hacktoberfest on these authors. In Figure 1, we see that these authors collectively contributed the most changes that year during October. Throughout October 2020, an average of over 1.5 million commits were made each day, a huge increase over the 0.5-1 million average daily commits we saw early in the spring of 2020!

Of course, Figure 1 also shows us that Hacktoberfest accomplished its goal of encouraging participation in the open-source community. October 2020 saw a large increase in the number of unique authors contributing each day: throughout the month, an average of over 300,000 users contributed each day. So along with the spike in commit volume, we also saw an increase in author volume.

Figure 1 – Analyzing the behavior of authors who contributed code in October 2020. (TOP) The number of daily code commits by these authors on GitHub throughout 2020. Line represents a 7-day rolling average. (BOTTOM) The number of unique authors committing code on GitHub throughout the year.
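For readers who want to reproduce this kind of view on their own data, here is a minimal sketch of how the two series in Figure 1 and their 7-day smoothing could be computed. It is not the pipeline we used: the `daily_activity` and `rolling_mean` helpers and the toy commit log are hypothetical, and the rolling mean here is a trailing window that averages over however many days are available at the start of the series.

```python
from collections import defaultdict
from datetime import date, timedelta


def daily_activity(commits):
    """Return {day: (commit_count, unique_author_count)} from (author, day) pairs."""
    counts = defaultdict(int)
    authors = defaultdict(set)
    for author, day in commits:
        counts[day] += 1
        authors[day].add(author)
    return {day: (counts[day], len(authors[day])) for day in counts}


def rolling_mean(values, window=7):
    """Trailing rolling mean; early entries average over the days seen so far."""
    out = []
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]  # drop the value that left the window
        out.append(running / min(i + 1, window))
    return out


# Hypothetical toy log of (author, commit date) pairs, for illustration only.
start = date(2020, 10, 1)
commits = [
    ("alice", start), ("alice", start), ("bob", start),
    ("alice", start + timedelta(days=1)),
    ("carol", start + timedelta(days=2)), ("bob", start + timedelta(days=2)),
]

activity = daily_activity(commits)
days = sorted(activity)
commit_series = [activity[d][0] for d in days]  # commits per day
author_series = [activity[d][1] for d in days]  # unique authors per day
smoothed = rolling_mean(commit_series, window=2)  # small window for the toy data
```

Dividing `commit_series` by `author_series` day by day gives a commits-per-author series, which is a natural companion to the two totals.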

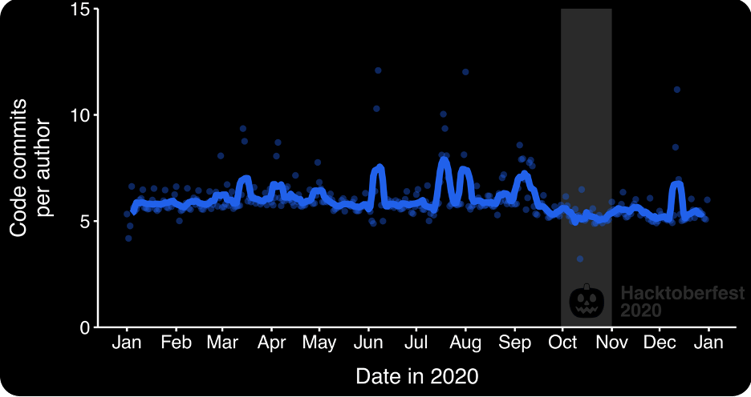

Interestingly, despite Hacktoberfest, authors did not change the number of daily commits that they pushed. In Figure 2, we see that authors consistently submitted an average of ~6 commits per day throughout 2020. Thus, instead of seeing authors make more commits in response to Hacktoberfest, we simply saw more authors throughout October. So can we see anything about their behavior that did change?

Figure 2 – The number of code commits per author each day throughout 2020. Line represents a 7-day rolling average.

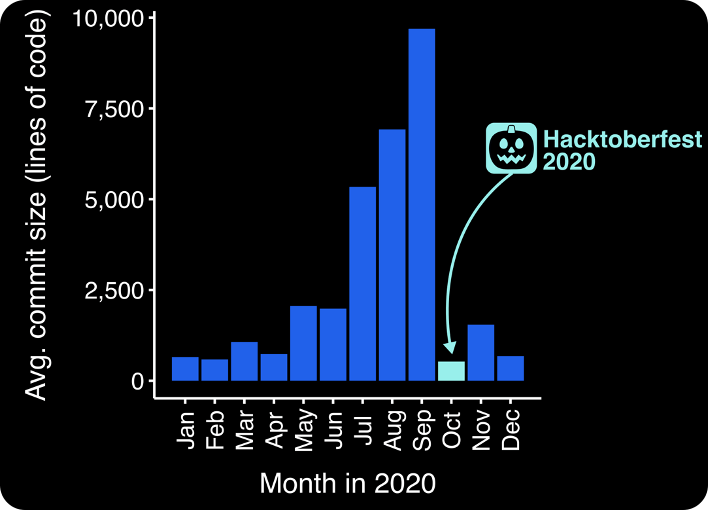

If we look at the size of changes that authors were submitting with each commit, we do see a notable shift in author behavior during Hacktoberfest 2020. Using GitHub’s API, we sampled approximately 1,000 random commits from each month throughout 2020 and measured how many lines of changes (both additions and deletions) authors committed each time. In Figure 3, we see that there was a sharp decrease in the average commit size in October 2020 when compared against the previous summer months. However, looking at the median size of commits each month, we see a more modest decline from 10 lines throughout the summer to 9 lines in October.

Taken together, this suggests that during Hacktoberfest authors were omitting large changeset commits (e.g., pushing an entire project or entire script) in favor of more standard contributions. We can infer this because a sharp decrease in the mean with only a slight change in the median suggests that the upper tail of the distribution has shrunk. Therefore, users during Hacktoberfest were no longer pushing the super large commits that might come with their own personal projects and instead stuck to more modest commits, perhaps suggesting that authors were really opting to try to make contributions to existing open-source projects.
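To make the mean-versus-median reasoning concrete, here is a small sketch with made-up numbers. The commit sizes below are hypothetical and are not drawn from our sample; they only illustrate how removing a few very large commits can collapse the mean while barely moving the median.

```python
from statistics import mean, median

# Hypothetical commit sizes (lines added + deleted); not the sampled data.
typical = [2, 4, 6, 8, 9, 10, 11, 12, 15, 20]

# "Summer": the typical commits plus two huge "push the whole project" commits.
summer = typical + [5000, 12000]

# "October": the huge commits are gone, replaced by two one-line edits.
october = typical + [1, 1]

for label, sizes in (("summer", summer), ("october", october)):
    print(f"{label}: mean={mean(sizes):.2f}, median={median(sizes)}")
```

In this toy example the mean falls from roughly 1,425 lines to about 8, while the median only slips from 10.5 to 8.5. That is the same qualitative pattern as in Figure 3: the bulk of the distribution is nearly unchanged, and the difference comes from the upper tail.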

This is supported by anecdotal evidence from frustrated software maintainers on Twitter. There are numerous articles documenting the less-than-desirable side effects of the event. Many of these maintainers note that contestants sent PRs that changed the case of some words in a README file or modified the pluralization of words in documentation. These were not meaningful contributions to the project, but as contributors learned to game the system to “win” at Hacktoberfest, these approaches spread quickly on YouTube and Twitter to other people. This amplification caused an overload of new, low-quality PRs for maintainers who were already donating time to their project (and are almost always uncompensated for this work). The observed change in commit size is likely in part due to this gamification of the event.

Figure 3 – The average size of a commit—the total number of lines of code added and/or deleted—over time in 2020. We sampled ~1,000 random commits each month from our authors of interest.

Did Hacktoberfest 2020 pose a security risk?

At Phylum, we’ve developed a machine learning model that can detect unusual behavior among authors of open-source software by analyzing commit times. The model learns the behavior of an author, establishing a baseline understanding of “normal” behavior—e.g., when they tend to be most active in committing changes. Then, the model can flag commits that appear “unusual.” Anomalous commits are important because they might indicate malicious behavior, like a compromised account that has been taken over by a bad actor. This model is one layer of a set that is used to identify risk indicators that drive Phylum’s Author Risk Score, one element of the scoring mechanism we use to communicate the risk in using open-source software.
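To give a feel for the general idea of a time-based baseline, here is a deliberately simple sketch. It is not Phylum's model, whose internals are not described in this post: the `hour_profile` and `is_anomalous` helpers, the 5% threshold, and the sample history are all assumptions made for illustration. It builds an hour-of-day profile from an author's past commits and flags a new commit when it lands in an hour the author has rarely used.

```python
from collections import Counter
from datetime import datetime


def hour_profile(timestamps):
    """Baseline: fraction of an author's past commits made in each hour of the day."""
    counts = Counter(ts.hour for ts in timestamps)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("need at least one past commit to build a baseline")
    return {hour: counts.get(hour, 0) / total for hour in range(24)}


def is_anomalous(profile, ts, threshold=0.05):
    """Flag a commit whose hour holds less than `threshold` of the baseline commits."""
    return profile[ts.hour] < threshold


# Hypothetical history: an author who commits at 09:00, 11:00, 14:00 and 16:00
# on each of the first 20 days of September 2020.
history = [
    datetime(2020, 9, day, hour)
    for day in range(1, 21)
    for hour in (9, 11, 14, 16)
]
profile = hour_profile(history)

usual = is_anomalous(profile, datetime(2020, 10, 3, 14))   # a familiar hour
unusual = is_anomalous(profile, datetime(2020, 10, 3, 3))  # 03:00, never seen before
```

A real system would need far more than this, such as time zones, weekday effects, and a minimum history length before trusting the baseline, but the sketch shows why a sudden change in habits stands out against a learned baseline.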

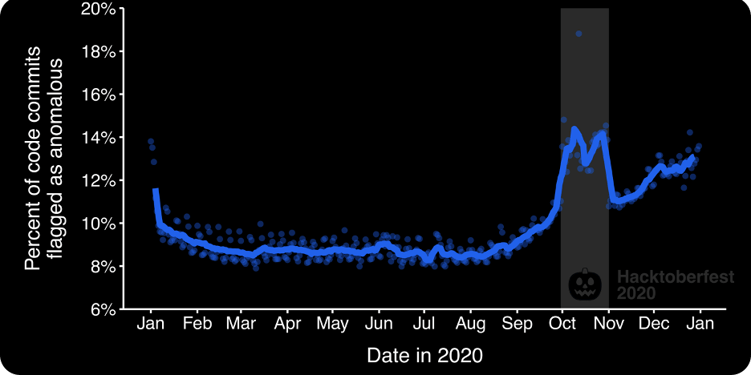

Turning our machine learning model onto our authors of interest, we see a large spike in anomalous commits during Hacktoberfest 2020! As we see in Figure 4, approximately 14% of daily commits appeared to be unusual behavior—an increase of 4 percentage points from the preceding summer. Some of these commits are probably getting flagged because our model learns both (a) when authors tend to commit and (b) which projects they usually contribute to. Therefore, Hacktoberfest likely encouraged a lot of authors to send PRs to projects they’d never interacted with before, causing our model to become highly suspicious of their behavior. After all, the model doesn’t know that Hacktoberfest is a thing!

Figure 4 – In October 2020, we see a large increase in the number of daily commits that are flagged as anomalous. Line represents a 7-day rolling average. We used our anomalous author behavior ML model to detect unusual behavior among GitHub users.

Still, the fact that Hacktoberfest led to an increase in anomalous commit behavior hints that it might pose a broader security threat. By encouraging authors to interact with projects they otherwise never would, Hacktoberfest might unintentionally make it easier for bad actors to hide and escape detection by more sophisticated methods, like our time-based behavioral ML model. Moreover, even large OSS projects have maintainers who become familiar with the work of a set of developers that contribute frequently. It’s an open question whether challenging that familiarity is a positive or a negative. As for the potential risk of a given commit during Hacktoberfest, we cannot yet say which were harmful because we’re still working on that data. We’re teaching the system to understand commit diffs and to more rapidly understand how contributors affect the security posture of open-source projects. This is germane to our mission to secure the universe of code, and fortunately that will also help this analysis!

Parting thoughts

Ultimately, I won’t make a value judgement on the Hacktoberfest 2020 controversy. To their credit, DigitalOcean quickly made changes to try to make future events less spammy and overwhelming for software maintainers. Overall, we can say that Hacktoberfest did seem to accomplish its top-line objective: in response to its simple incentives (a t-shirt!), large numbers of users suddenly committed code to open-source projects.

That said, as with other blog posts here at Phylum, this case study highlights the dual nature of the open-source software ecosystem. On one hand, events like Hacktoberfest can bring an influx of new and potentially innovative users into the open-source community, likely benefiting us all with more and better software. On the other hand, the sudden influx of users might allow bad actors to better slip in undetected, potentially putting people downstream—i.e., the users of open-source software—at risk.